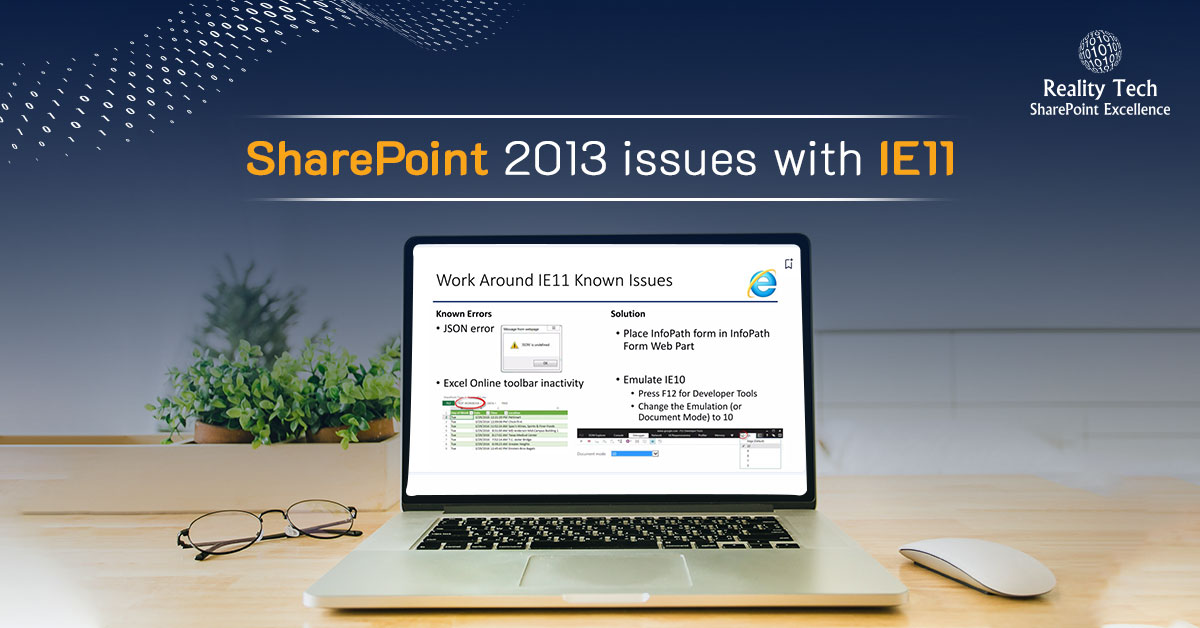

In IE11, SharePoint Web Part pages could not be edited in the browser. Web parts could not be selected while creating a page, and the web part properties would not open for editing. The problem seems to be specific to IE11; the same pages work great in IE10.

The solution is to add the SharePoint site's hostname to IE11's Compatibility View list, so that the site runs in Compatibility View.

To get it to work, here’s what I did:

1. Press ALT + T while viewing the SharePoint page to open the Tools menu

2. Click Compatibility View Settings in the menu

3. Click Add to add the current SharePoint site to the list of Compatibility View sites

4. Click Close
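If you need to repeat the steps above for several SharePoint sites, it helps to know exactly which hostname each page belongs to. Here is a minimal sketch in Python, assuming the Compatibility View entry is keyed on the site's hostname as described above. The function name `compat_view_host` and the example URL are made up for illustration and are not part of SharePoint or IE.

```python
from urllib.parse import urlsplit


def compat_view_host(page_url: str) -> str:
    """Return the lowercase hostname of a page URL, without scheme, port, or path."""
    host = urlsplit(page_url).hostname
    if host is None:
        # Happens when the URL has no scheme/host part, e.g. "sites/team/Home.aspx"
        raise ValueError(f"no hostname found in {page_url!r}")
    return host


# Hypothetical SharePoint page URL, for illustration only.
print(compat_view_host("https://sharepoint.example.com:8443/sites/team/SitePages/Home.aspx"))
```

Compare the printed hostname with what IE suggests in the Compatibility View Settings dialog before clicking Add.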

That’s it. Not only does page editing work again, but other parts of the page come back to life too.

Happy browsing!